On Tuesday, Elon Musk’s AI company xAI announced the beta release of two new language models, Grok-2 and Grok-2 mini, available to subscribers of his social media platform X (formerly Twitter). The models are also linked to the recently released Flux image synthesis model, which allows X users to create largely uncensored photorealistic images that can be shared on the site.

“Flux, accessible through Grok, is an excellent text-to-image generator, but it is also really good at creating fake photographs of real locations and people, and sending them right to Twitter,” wrote frequent AI commentator Ethan Mollick on X. “Does anyone know if they are watermarking these in any way? It would be a good idea.”

In a report posted earlier today, The Verge noted that Grok’s image generation capabilities appear to have minimal safeguards, allowing users to create potentially controversial content. According to their testing, Grok produced images depicting political figures in compromising situations, copyrighted characters, and scenes of violence when prompted.

The Verge found that while Grok claims to have certain limitations, such as avoiding pornographic or excessively violent content, these rules seem inconsistent in practice. Unlike other major AI image generators, Grok does not appear to refuse prompts involving real people or add identifying watermarks to its outputs.

Given what people are generating so far, including images of Donald Trump and Kamala Harris kissing or giving a thumbs-up on the way to the Twin Towers in an apparent 9/11 attack, the unrestricted outputs may not last for long. But then again, Elon Musk has made a big deal out of “freedom of speech” on his platform, so perhaps the capability will remain (until, as seems likely, someone files a defamation or copyright suit).

The use of Grok’s image generator to shock raises what is by now an old question in AI: Should misuse of an AI image generator be the responsibility of the person who writes the prompt, the organization that created the AI model, or the platform that hosts the images? So far, there is no clear consensus, and the question has yet to be resolved legally, although a newly proposed US law called the NO FAKES Act would presumably hold X liable for the creation of realistic image deepfakes.

With Grok-2, the GPT-4 ceiling still holds

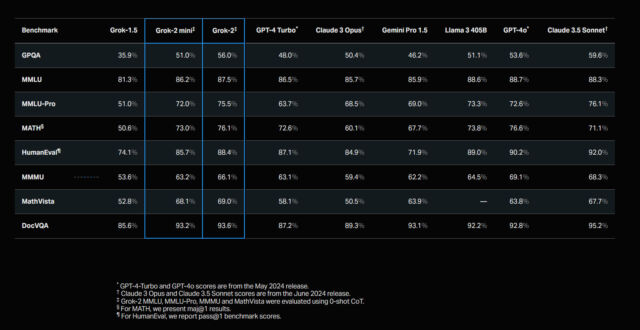

Looking beyond images, in a release blog post, xAI claims that Grok-2 and Grok-2 mini represent significant advancements in capabilities, with Grok-2 supposedly outperforming some leading competitors in recent benchmarks and what we call “vibemarks.” It’s always wise to approach those claims with a dose of skepticism, but it appears that the “GPT-4 class” of AI language models (those with capabilities similar to OpenAI’s model) has grown larger, even though the GPT-4 barrier has not yet been smashed.

“There are now five GPT-4 class models: GPT-4o, Claude 3.5, Gemini 1.5, Llama 3.1, and now Grok 2,” wrote Ethan Mollick on X. “All of the labs are saying there is room left for continued giant improvements, but we haven’t seen any models truly leap above GPT-4… yet.”

xAI says it recently introduced an early version of Grok-2 to the LMSYS Chatbot Arena under the name “sus-column-r,” where it reportedly achieved a higher overall Elo score than models like Claude 3.5 Sonnet and GPT-4 Turbo. Chatbot Arena is a popular subjective vibemarking website for AI models, but it became the subject of controversy recently when people disagreed with OpenAI’s GPT-4o mini placing so highly in the rankings.
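For readers unfamiliar with Elo ratings, here is a minimal Python sketch of the textbook Elo formula, in which each head-to-head vote nudges two ratings up or down. It is an illustration only: the function names and the K-factor of 32 are assumptions made for this example, and it is not a description of how Chatbot Arena actually computes its leaderboard.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the textbook Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return both ratings after a single head-to-head vote.

    k (the K-factor) is an illustrative assumption; it controls how far
    one result moves the ratings.
    """
    expected_a = expected_score(rating_a, rating_b)
    actual_a = 1.0 if a_won else 0.0
    delta = k * (actual_a - expected_a)
    # Whatever A gains, B loses, so the total rating pool stays constant.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    # Two hypothetical models that start out evenly matched.
    print(expected_score(1200, 1200))
    print(update(1200, 1200, a_won=True))
```

Under this model, a rating gap says something about how often one model would be expected to win a vote against another, which is why small differences near the top of a leaderboard should be read with caution.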

According to xAI, both new Grok models show improvements over predecessor Grok-1.5 in areas like graduate-level science knowledge, general knowledge, and math problem-solving in benchmarks that have similarly proved controversial. The company also highlighted Grok-2’s performance on visual tasks, claiming state-of-the-art results in visual math reasoning and document-based question answering.

The models are now available to X Premium and Premium+ subscribers through an updated app interface. Unlike some of its competitors in the open weights space, xAI isn’t releasing the model weights for download or independent verification. This closed approach stands in stark contrast to that of Meta, which recently released its Llama 3.1 405B model for anyone to download and run locally.

xAI plans to release both models through an enterprise API later this month. The company says this API will feature multi-region deployment options and security measures like mandatory multifactor authentication. Details on pricing, usage limits, or data handling policies have not yet been announced.

Photorealistic image generation aside, perhaps Grok-2’s biggest liability is its deep link to X, which gives it a tendency to pull inaccurate information from tweets. It’s a bit like having a friend who insists on checking the social media site before answering any of your questions, even when it isn’t particularly relevant.

As Mollick pointed out on X, this close link can be annoying: “I only have access to Grok 2 mini right now, and it seems like a solid model, but often seems ill-served by its RAG connection to Twitter,” he wrote. “The model is fed results from Twitter that seem irrelevant to the prompt, and then desperately tries to connect them into something coherent.”