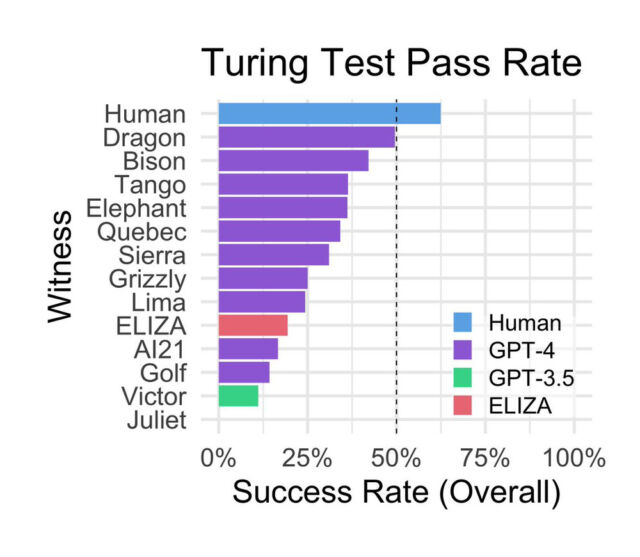

In a preprint research paper titled “Does GPT-4 Pass the Turing Test?”, two researchers from UC San Diego pitted OpenAI’s GPT-4 AI language model against human participants, GPT-3.5, and ELIZA to see which could trick participants into thinking it was human with the greatest success. But along the way, the study, which has not been peer-reviewed, found that human participants correctly identified other humans in only 63 percent of the interactions—and that a 1960s computer program surpassed the AI model that powers the free version of ChatGPT.

Even with limitations and caveats, which we’ll cover below, the paper presents a thought-provoking comparison between AI model approaches and raises further questions about using the Turing test to evaluate AI model performance.

British mathematician and computer scientist Alan Turing first conceived the Turing test as “The Imitation Game” in 1950. Since then, it has become a famous but controversial benchmark for determining a machine’s ability to imitate human conversation. In modern versions of the test, a human judge typically talks to either another human or a chatbot without knowing which is which. If the judge cannot reliably tell the chatbot from the human a certain percentage of the time, the chatbot is said to have passed the test. The threshold for passing the test is subjective, so there has never been a broad consensus on what would constitute a passing success rate.

In the recent study, posted to arXiv at the end of October, UC San Diego researchers Cameron Jones (a PhD student in Cognitive Science) and Benjamin Bergen (a professor in the university’s Department of Cognitive Science) set up a website called turingtest.live, where they hosted a two-player implementation of the Turing test over the Internet. Their goal was to see how well GPT-4, when prompted in different ways, could convince people it was human.

Through the site, human interrogators interacted with various witnesses, which were either other humans or AI models: the aforementioned GPT-4, GPT-3.5, and ELIZA, a rules-based conversational program from the 1960s. “The two participants in human matches were randomly assigned to the interrogator and witness roles,” write the researchers. “Witnesses were instructed to convince the interrogator that they were human. Players matched with AI models were always interrogators.”
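To make “rules-based” concrete, here is a minimal Python sketch of an ELIZA-style responder. It is an illustration only: the patterns, reply templates, and the `respond` function are hypothetical and are not taken from Weizenbaum’s original script or from the study. The program matches the input against a short list of patterns and echoes part of the message back, falling back to a generic prompt when nothing matches.

```python
import re

# Hypothetical rules for illustration only; not Weizenbaum's original script.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bare you (.+)\?", re.IGNORECASE), "Why does it matter whether I am {0}?"),
]

# Generic reply used when no rule matches.
FALLBACK = "Please go on."


def respond(message: str) -> str:
    """Return the reply for the first matching rule, else the fallback."""
    for pattern, template in RULES:
        match = pattern.search(message)
        if match:
            # Reuse the captured fragment, minus trailing punctuation.
            fragment = match.group(1).rstrip(".!?")
            return template.format(fragment)
    return FALLBACK


if __name__ == "__main__":
    for line in ["I am tired.", "Are you a bot?", "Hello"]:
        print(f"> {line}\n{respond(line)}")
```

A program like this never volunteers facts or long answers, which fits the conservative behavior the authors describe.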

The experiment involved 652 participants who completed a total of 1,810 sessions. Of those, 1,405 games were analyzed after the researchers excluded certain scenarios, such as repeated AI games (which led participants to expect AI model interactions when other humans weren’t online) and games in which the interrogator and witness knew each other personally, sometimes because they were sitting in the same room.

Surprisingly, ELIZA, developed in the mid-1960s by computer scientist Joseph Weizenbaum at MIT, scored relatively well during the study, achieving a success rate of 27 percent. GPT-3.5, depending on the prompt, scored a 14 percent success rate, below ELIZA. GPT-4 achieved a success rate of 41 percent, second only to actual humans.
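As a rough sketch, the reported figures can be ranked and checked against a pass threshold in a few lines of Python. The success rates below are the ones quoted in this article. The 50 percent threshold is purely an assumption for illustration, since there is no consensus on a passing rate, and the helper names `rank_witnesses` and `passes` are hypothetical.

```python
# Success rates (percent) as reported in this article.
REPORTED_RATES = {"Human": 63, "GPT-4": 41, "ELIZA": 27, "GPT-3.5": 14}

# Assumed threshold for illustration only; no consensus value exists.
ASSUMED_THRESHOLD = 50


def rank_witnesses(rates):
    """Return (name, rate) pairs sorted from highest to lowest rate."""
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


def passes(rate, threshold=ASSUMED_THRESHOLD):
    """Return True if the rate meets or exceeds the chosen threshold."""
    return rate >= threshold


if __name__ == "__main__":
    for name, rate in rank_witnesses(REPORTED_RATES):
        verdict = "meets" if passes(rate) else "falls below"
        print(f"{name}: {rate}% ({verdict} the assumed {ASSUMED_THRESHOLD}% threshold)")
```

Under this assumed threshold, only the human witnesses clear the bar. A different threshold would change the verdicts but not the ranking.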

GPT-3.5, the base model behind the free version of ChatGPT, has been conditioned by OpenAI specifically not to present itself as a human, which may partially account for its poor performance. In a post on X, Princeton computer science professor Arvind Narayanan wrote, “Important context about the ‘ChatGPT doesn’t pass the Turing test’ paper. As always, testing behavior doesn’t tell us about capability.” In a reply, he continued, “ChatGPT is fine-tuned to have a formal tone, not express opinions, etc, which makes it less humanlike. The authors tried to change this with the prompt, but it has limits. The best way to pretend to be a human chatting is to fine-tune on human chat logs.”

Further, the authors speculate about the reasons for ELIZA’s relative success in the study:

“First, ELIZA’s responses tend to be conservative. While this generally leads to the impression of an uncooperative interlocutor, it prevents the system from providing explicit cues such as incorrect information or obscure knowledge. Second, ELIZA does not exhibit the kind of cues that interrogators have come to associate with assistant LLMs, such as being helpful, friendly, and verbose. Finally, some interrogators reported thinking that ELIZA was ‘too bad’ to be a current AI model, and therefore was more likely to be a human intentionally being uncooperative.”

During the sessions, the most common strategies used by interrogators included small talk and questioning about knowledge and current events. More successful strategies involved speaking in a non-English language, inquiring about time or current events, and directly accusing the witness of being an AI model.

Interestingly, the study found that participants based their decisions primarily on linguistic style and socio-emotional traits rather than on the perception of intelligence alone. Participants noted when responses were too formal or too informal, lacked individuality, or seemed generic. The study also showed that participants’ education and familiarity with large language models (LLMs) did not significantly predict their success in detecting AI.

The authors acknowledge the study’s limitations, including potential sample bias from recruiting participants through social media and a lack of incentives for participants, which may have led some people not to fulfill their assigned role. They also say their results (especially the performance of ELIZA) may support common criticisms of the Turing test as an inaccurate way to measure machine intelligence. “Nevertheless,” they write, “we argue that the test has ongoing relevance as a framework to measure fluent social interaction and deception, and for understanding human strategies to adapt to these devices.”